A new kind of deception is steadily becoming a very real threat. It’s called a deepfake, and if you’re an executive, a professional or an older adult, it’s one scam you absolutely need to know about.

This article explains what a deepfake is, whether deepfakes are legal, how scammers use tools like DeepFaceLab and, most importantly, how you can detect and prevent deepfake fraud.

1. What Is a Deepfake?

A deepfake is a video, image or audio clip that has been altered using artificial-intelligence tools so that it looks or sounds as if someone did or said something they never did. The term combines “deep learning” (an AI method) and “fake”.

In practice:

- A face from Person A is swapped into a video of Person B, so it appears Person A said or did something they didn’t.

- A voice recording is manipulated to make it ‘sound’ like someone you know (or a company’s executive) giving instructions.

- A photo is altered so that you appear to be somewhere you never were, or doing something you never did.

Many deepfake tools are now freely available. For example, projects like DeepFaceLab allow hobbyists to experiment with face-swapping. That means the barrier to entry for scammers is much lower.

Why does this matter? Because scammers can impersonate trusted people (bosses, family members, officials) in ways that feel real, which makes their frauds more believable.

2. Why Deepfake Scams Are Growing (and the Losses Are Real)

i) Financial Toll

Deepfake-enabled fraud is already costing businesses and individuals big money:

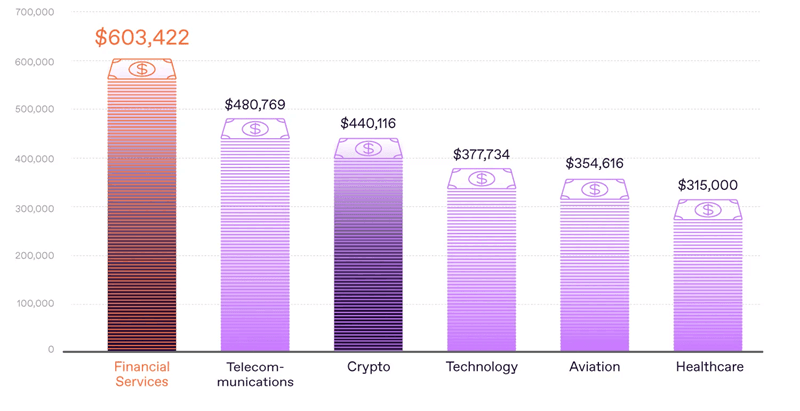

- In Q1 of 2025 alone, deepfake-driven fraud caused more than US $200 million in losses.

- A report by IRONSCALES found that on average organisations that suffered deepfake incidents lost around US $280,000 each.

- Globally, as cheap AI-tools proliferate, IBM estimates show losses could exceed US $1 trillion in the near future.

Deepfake on financial lost based on industries in 2025. Source: Regulaforensics

ii) Why Executives, Professionals & Seniors Are At Risk

Executives/Professionals: Deepfakes can be used in “CEO fraud” or business email scams, where “the CEO” uses an AI-generated voice or video to instruct a finance team to make a transfer.

Older adults: Scammers impersonate grandchildren or other family members in audio or video messages, saying they are in urgent trouble and asking for money immediately.

A recent study found older people are particularly vulnerable because traditional scam cues (bad spelling, obviously fake ID) may not appear in deepfake scams.

Everyone: The key advantage for scammers is trust. If you believe the voice or face belongs to someone you know or respect, you’re less likely to question the request.

iii) Legal Uncertainty

Are deepfakes legal? The short answer: it’s complicated.

Creating a deepfake is not always illegal in itself, but using one to impersonate someone, commit fraud, distribute non-consensual imagery or cause other harm often breaks the law.

For example:

- In Malaysia and elsewhere, experts are calling for stronger laws because deepfakes complicate evidence, attribution and prosecution.

- Several countries are now passing laws designed to regulate or criminalise certain uses of deepfakes (e.g., non-consensual imagery, impersonation).

3. How Do Scammers Use Deepfakes?

Here are some of the scam scenarios you should know:

i) Voice-Cloning & Impersonation

A scammer obtains a short recording of a senior leader’s voice (an executive or head of department, for example), clones it with AI, then calls a staff member pretending to be that person and instructs them to transfer funds. Because the voice sounds real, the target acts.

ii) Video or Face-Swap Scams

A scammer uses DeepFaceLab or other tools to put a trusted face, perhaps a family member or a trusted official, into a convincing video. They then ask the target to act quickly or risk “serious consequences.”

iii) Deepfake in Social Engineering

Scammers use deepfakes to create false urgency, for example “Your account is involved in money laundering; speak to this ‘officer’ now” (deepfake video or call). The victim is told to transfer money or buy assets like gold or crypto immediately.


iv) Targeting Older Adults via Emotional Deepfakes

Scammers may pretend to be a grandchild in trouble via a deepfake voice-call: “Grandma, I’m stuck overseas, need money now.” The older adult believes the voice and acts before verifying.

4. How to Detect Deepfakes: Simple Tips for Everyone

Even non-tech people can pick up warning signs. Here’s what to look out for:

| Warning Sign | What You Might See or Hear |
|---|---|
| Strange lighting, shadows or unnatural facial movement | The face might flicker, skin tone may shift slightly, eye-blinking or lip movements seem off. |
| Audio/Video mismatch | The lips don’t sync with speech; the voice sounds a little “tinny” or robotic. |
| Unusual request with urgency | “Transfer now or everything is lost”. This pressure is classic scam behavior. |
| The person is unreachable via normal channels | If “your boss” calls but doesn’t go through official internal lines, be skeptical. |
| Unexpected medium or device | A known official uses WhatsApp video call and asks you to install unusual apps or send QR codes. It’s odd for normal business. |

You can also use reverse image search, check official channels, or verify the person’s identity via known contacts.
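For teams that want to turn the warning signs into a simple triage rule, the checklist can be sketched in a few lines of Python. This is only an illustration: the key names and the `assess` function are hypothetical, and the rule it encodes (any single red flag means pause and verify) is an assumption about how cautious you want to be, not a tested detection method.

```python
# Hypothetical keys for the five warning signs in the table above.
WARNING_SIGNS = {
    "visual_glitches": "Strange lighting, shadows or unnatural facial movement",
    "av_mismatch": "Audio/video mismatch",
    "urgent_request": "Unusual request with urgency",
    "unreachable": "The person is unreachable via normal channels",
    "unexpected_medium": "Unexpected medium or device",
}


def assess(observed: set[str]) -> str:
    """Return a recommended action for the set of warning signs noticed."""
    unknown = observed - set(WARNING_SIGNS)
    if unknown:
        raise ValueError(f"Unknown warning signs: {sorted(unknown)}")
    if not observed:
        # Absence of visible clues does not prove the call is genuine.
        return "no red flags noted; still verify any money request"
    # Assumed rule: one red flag is enough to stop and check.
    return "pause and verify via a known channel"


if __name__ == "__main__":
    print(assess({"urgent_request", "unexpected_medium"}))
```

The point of the sketch is the design choice: you do not need several signs to line up before acting cautiously, and seeing none of them is not proof that a call is genuine.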

5. How to Prevent Deepfake Scams: Protect Yourself and Your Organisation

i) For Executives & Professionals

- Establish verification protocols: If a “CEO” calls and asks for a money or asset transfer, always verify via a second channel (e.g., face-to-face, a known email address or an internal system).

- Ensure finance teams are trained about deepfake threats and audio/video scams.

- Use two-factor verification and hold procedures that require more than one sign-off for large transfers.

- Limit the exposure of executive voice recordings online, and anonymise sensitive media that could be used to train deepfake models.

- Invest in tools that detect deepfake videos/voices (especially important for large organisations). Reports show many organisations are aware of the risk but haven’t yet invested enough.
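The verification and sign-off rules above can be expressed as a small policy check. The following is a minimal sketch, assuming a hypothetical `TransferRequest` record, a hypothetical threshold of 10,000 for "large" transfers, and a rule that the requester cannot approve their own request. None of these names or numbers come from a real finance system.

```python
from dataclasses import dataclass, field

# Hypothetical limit; each organisation would set its own.
LARGE_TRANSFER_THRESHOLD = 10_000


@dataclass
class TransferRequest:
    requester: str                      # who asked for the transfer
    amount: float
    verified_out_of_band: bool = False  # confirmed via a second, known channel
    approvers: set[str] = field(default_factory=set)


def may_release(request: TransferRequest) -> bool:
    """Return True only if the request passes both checks."""
    # Check 1: the request was confirmed through a second channel,
    # not just the call or video in which it arrived.
    if not request.verified_out_of_band:
        return False
    # Check 2: enough independent sign-offs. The requester's own
    # approval is ignored (an assumption of this sketch).
    independent = request.approvers - {request.requester}
    required = 2 if request.amount >= LARGE_TRANSFER_THRESHOLD else 1
    return len(independent) >= required


if __name__ == "__main__":
    req = TransferRequest(requester="ceo", amount=50_000)
    req.verified_out_of_band = True
    req.approvers.update({"alice", "bob"})
    print(may_release(req))
```

Because the second-channel check comes first, a convincing voice or video on its own can never release funds, however many people it persuades.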

ii) For Older Adults & Families

- Never act on a phone or video message alone when it demands a money or asset transfer: if it’s a “family emergency,” call a known relative directly.

- Ask questions that only the genuine person could answer (“What was the name of the restaurant we visited last month?”).

- Use trusted contacts (children, other family) to help if you’re unsure. Encourage “pause and verify”.

- Avoid installing apps at someone else’s request without checking what they are and why they are needed.

- Share awareness: talk to older family members about deepfake scams so they recognize them when they happen.

iii) For Everyone

- Be sceptical of urgent, high-pressure requests to transfer money, buy gifts/assets, install unknown apps or turn over valuables to “collectors”.

- Restrict what personal content you publish publicly (voice clips, video, faces). The less available for scammers to use, the better.

- Monitor your accounts regularly for unusual activity.

- If you suspect you’re being targeted, report it early to your IT/security team or local police.

6. Are Deepfakes Legal? What the Laws Say

As we mentioned, creating a deepfake itself isn’t always illegal, but using one to commit fraud, impersonate someone, or distribute non-consensual content often is. Here’s a quick snapshot:

- Deepfakes that impersonate someone in order to trick a victim into handing over money may fall under fraud or identity-theft laws.

- Some jurisdictions are now specifically targeting deepfakes via new laws or amendments. For example, Denmark is considering giving individuals copyright over their own image, face, voice and features, so that deepfakes made without consent are easier to challenge.

- Legal experts note that deepfakes present unique challenges because the “evidence” is synthetic, easily duplicated and often crosses borders, which makes enforcement harder.

In short: yes, you should assume deepfakes may be illegal if they’re used to trick or harm someone. The laws are evolving, but they’re getting stricter.

7. Why This Matters to You

- If you’re an executive: one deepfake voice or video could lead to a fraudulent transfer, loss of company assets or damage to reputation.

- If you’re a professional: clients may trust you with sensitive data; if you’re tricked by a deepfake, that trust can be broken.

- If you’re an older adult: you may be emotionally targeted. The deepfake doesn’t need to be perfect; it just needs to be believable enough.

- For everyone: the technology is getting cheaper and easier to use. A scam can now be produced with minimal resources but still seem real.

When a tool that once required specialists becomes widely available, the risk increases.

Stay vigilant. Stay sceptical. And remember: technology can make something look real, but trusting what you see/hear without verification is where the risk lies.

Deepfake Awareness Q&A

Q1: What is a deepfake?

A deepfake is a digitally altered video, photo, or audio created using artificial intelligence (AI) to make it appear that someone did or said something they never actually did.

The word combines “deep learning” (a type of AI) and “fake.” Deepfakes are often made with face-swapping tools like DeepFaceLab or with voice-cloning software, and the results can look and sound realistic.

Q2: Why are deepfakes dangerous?

Deepfakes are dangerous because they exploit trust. Scammers can impersonate CEOs, family members, or officials to trick victims into transferring money, revealing sensitive data, or making urgent business decisions.

Executives and older adults are common targets because they often hold authority or savings that scammers want to exploit.

Q3: How do scammers use deepfakes?

- Business scams: Impersonating a company leader’s voice or face to approve fake fund transfers.

- Government or police scams: Using fake video calls to claim victims are under investigation.

- Family emergency scams: Mimicking a loved one’s voice asking for help or money.

- Romance or investment scams: Using AI-generated photos or videos to gain trust.

Q4: How can I detect a deepfake?

Watch and listen carefully because deepfakes often have small clues:

- Lip movements or speech don’t perfectly match.

- Lighting or shadows seem unnatural.

- The voice may sound robotic or slightly off.

- The person makes unusual or urgent requests.

If unsure, verify through official channels or call the person directly on a trusted number before acting.

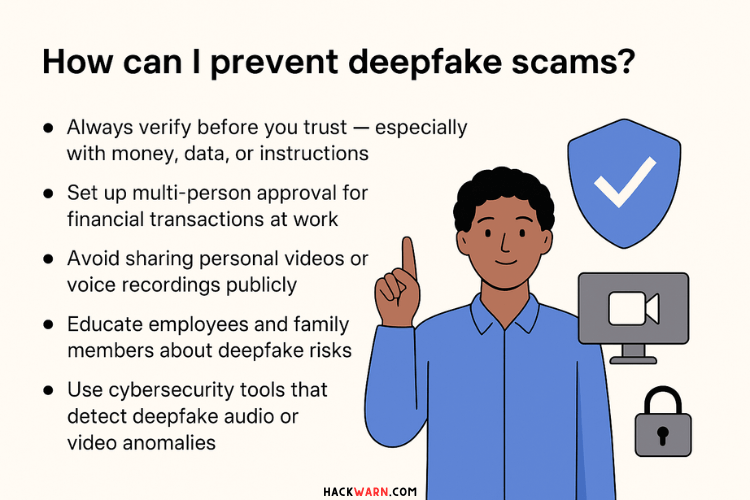

Q5: How can I prevent deepfake scams?

- Always verify before you trust, especially with money, data, or instructions.

- Set up multi-person approval for financial transactions at work.

- Avoid sharing personal videos or voice recordings publicly.

- Educate employees and family members about deepfake risks.

- Use cybersecurity tools that detect deepfake audio or video anomalies.

Q6: Are deepfakes legal?

Creating a deepfake is not automatically illegal, but using it to deceive, impersonate, or defraud someone usually breaks the law.

Many countries, including the US, UK, Singapore and Malaysia, are drafting or enforcing deepfake-specific legislation to combat misuse and protect victims.

Q7: How much money have deepfake scams caused in losses?

Recent reports show that deepfake-related fraud caused over US$200 million in losses in the first quarter of 2025, with organisations that suffered deepfake incidents losing around US$280,000 each on average.

Q8: What should I do if I suspect a deepfake scam?

- Stop and verify the source immediately.

- Do not send money or share information based on calls, videos or messages that feel suspicious.

- Report the incident to the police or your national cybercrime center.

- Inform your organisation’s IT or security department if it involves company communication.

Q9: Can deepfakes be detected automatically?

Yes. AI-powered deepfake detection tools are emerging that analyse facial inconsistencies, audio frequencies and video patterns.

However, human vigilance is still the best defense. Always trust your instincts. If something feels “off,” pause and verify before reacting.
